
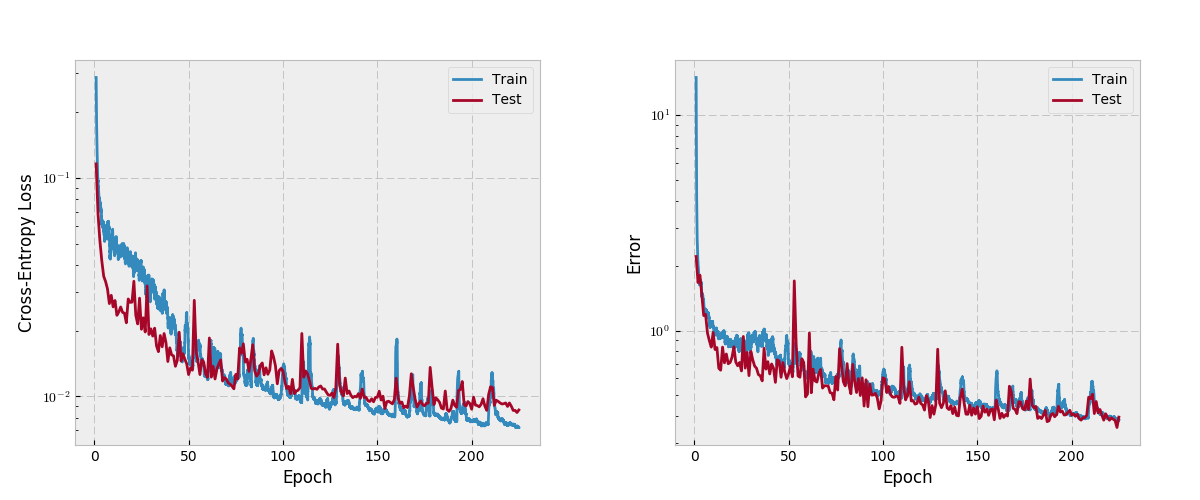

3/16/2024

PyTorch cross entropy loss

Class over-sampling is accomplished by WeightedRandomSampler in PyTorch, using the same per-class weights that would otherwise be passed to the loss.
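As a rough sketch of the sampler approach: the dataset below is made up (90 samples of one class, 10 of another, random features), and the weights follow the "largest class size divided by class size" rule. The sampler wants one weight per sample, not per class, so the class weights are indexed by each sample's label.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset, WeightedRandomSampler

torch.manual_seed(0)

# Hypothetical imbalanced dataset: 90 samples of class 0, 10 of class 1.
labels = torch.cat([torch.zeros(90, dtype=torch.long),
                    torch.ones(10, dtype=torch.long)])
features = torch.randn(len(labels), 4)
dataset = TensorDataset(features, labels)

# Class weight = size of the largest class / size of that class.
class_counts = torch.bincount(labels).float()
class_weights = class_counts.max() / class_counts

# One weight per sample: look up each sample's class weight by its label.
sample_weights = class_weights[labels]
sampler = WeightedRandomSampler(sample_weights,
                                num_samples=len(labels),
                                replacement=True)

loader = DataLoader(dataset, batch_size=20, sampler=sampler)

# Count how often each class is drawn in one pass over the loader.
drawn = torch.cat([y for _, y in loader])
print(torch.bincount(drawn, minlength=2))
```

With these weights each class is drawn with roughly equal probability, so the minority class shows up in batches far more often than its 10% share of the data.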

How to read the predicted label of a Neural Network with Cross Entropy Loss in PyTorch

I am using a neural network to predict the quality of the Red Wine dataset, available on the UCI Machine Learning Repository, using PyTorch, with cross-entropy as the loss function. Is this the right approach to begin with, or are there other, better approaches?

The weight of class $c$ is the size of the largest class divided by the size of class $c$. For example, if class 1 has 900, class 2 has 15,000, and class 3 has 800 samples, then their weights would be 16.67, 1.0, and 18.75 respectively. You can also use the smallest class as the numerator, which gives 0.889, 0.053, and 1.0 respectively. This is only a re-scaling; the relative weights are the same.

We can also use class over-sampling, which is equivalent, in expectation, to using class weights.

Some intuitive guidelines from a MachineLearningMastery post, for a mean loss based on the natural log: Cross-Entropy = 0.00: perfect probabilities. Cross-Entropy < 0.02: great probabilities.
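The class-weight arithmetic can be checked with a few lines of plain Python. This is only a sketch: the helper name `class_weights` is made up for illustration, and the largest class is assumed to have 15,000 samples, which is the size the quoted weights imply (900 × 16.67 ≈ 800 × 18.75 = 15,000).

```python
# Class sizes for the worked example; the 15,000 figure is inferred
# from the quoted weights, not stated independently.
class_sizes = {1: 900, 2: 15000, 3: 800}


def class_weights(sizes, reference=max):
    """Weight of class c = reference(class sizes) / size of class c."""
    ref = reference(sizes.values())
    return {c: ref / n for c, n in sizes.items()}


# Largest class as numerator.
by_largest = class_weights(class_sizes, reference=max)
# Smallest class as numerator: a pure re-scaling of the same weights.
by_smallest = class_weights(class_sizes, reference=min)

print({c: round(w, 2) for c, w in by_largest.items()})
# {1: 16.67, 2: 1.0, 3: 18.75}
print({c: round(w, 3) for c, w in by_smallest.items()})
# {1: 0.889, 2: 0.053, 3: 1.0}
```

The two sets of weights differ by the constant factor 15000 / 800 = 18.75 for every class, which is why the choice of numerator does not change the relative weighting.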

What kind of loss function would I use here? This would need to be weighted, I suppose? How does that work in practice?

Cross-entropy is the go-to loss function for classification tasks, either balanced or imbalanced. It is the first choice when no preference has been built from domain knowledge yet.
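In practice, weighting might look something like the sketch below. The model is a stand-in single linear layer, the inputs and targets are random, and the class counts are made up for illustration; none of this is the asker's actual network or data. The same snippet shows how the predicted label is read off: it is the index of the largest logit.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical setup: 3 classes, 4 input features, a batch of 8 samples.
num_classes, num_features = 3, 4
model = nn.Linear(num_features, num_classes)  # stand-in for a real network

# Per-class weights: largest class size divided by each class size.
class_counts = torch.tensor([900.0, 15000.0, 800.0])
weights = class_counts.max() / class_counts

# CrossEntropyLoss takes raw logits and integer class indices in [0, C-1];
# it applies log-softmax internally, so the model should not apply softmax.
criterion = nn.CrossEntropyLoss(weight=weights)

inputs = torch.randn(8, num_features)
targets = torch.randint(0, num_classes, (8,))

logits = model(inputs)              # shape: (8, 3)
loss = criterion(logits, targets)   # scalar, weighted mean over the batch

# Predicted label = index of the largest logit for each sample.
predicted = logits.argmax(dim=1)    # shape: (8,)

# Softmax is only needed if class probabilities are wanted for inspection.
probs = torch.softmax(logits, dim=1)
```

Since the loss expects class indices starting at 0, labels on another scale (such as quality scores) would need to be remapped to 0, 1, 2, ... before training and mapped back after taking the argmax.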
